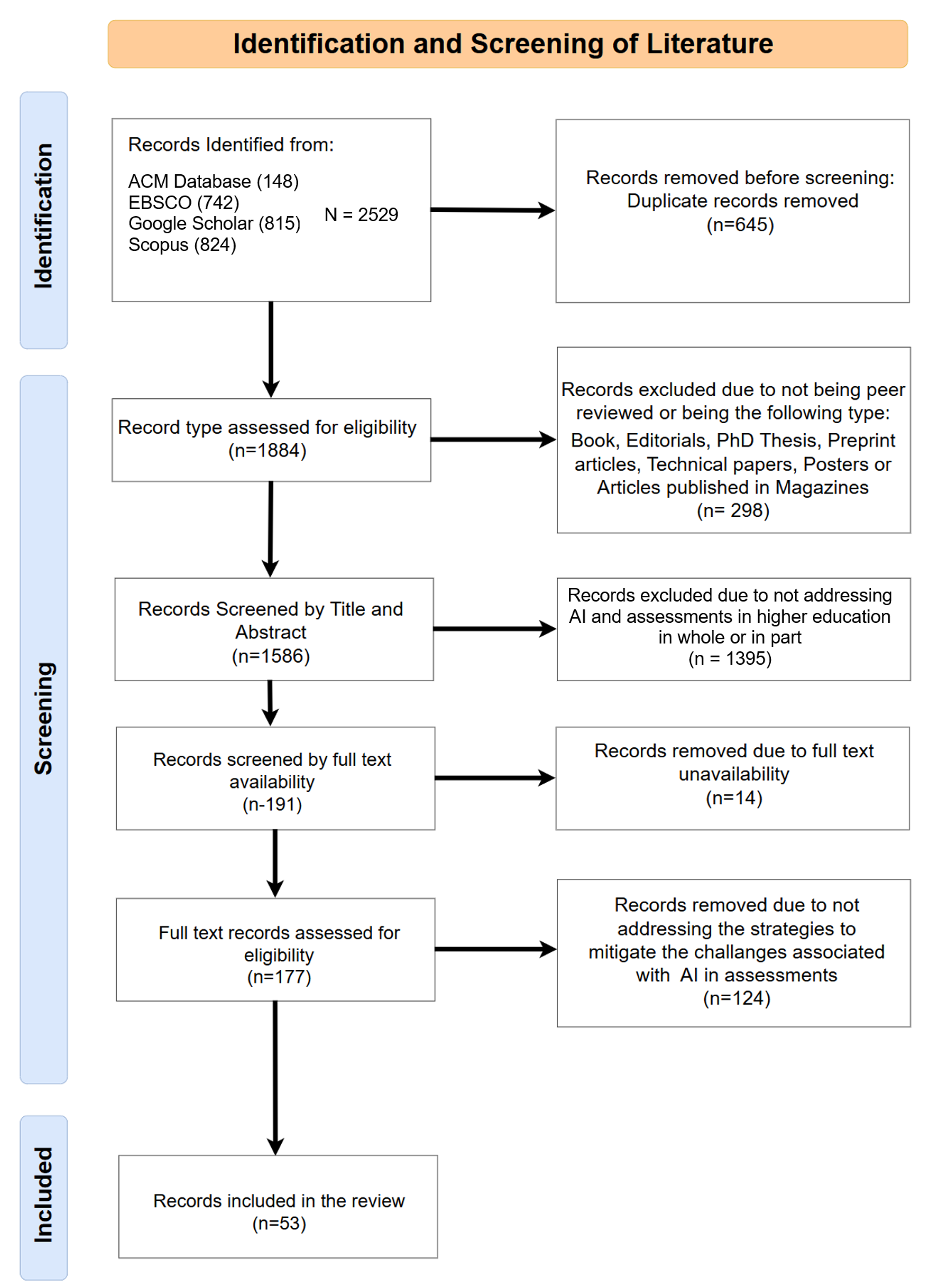

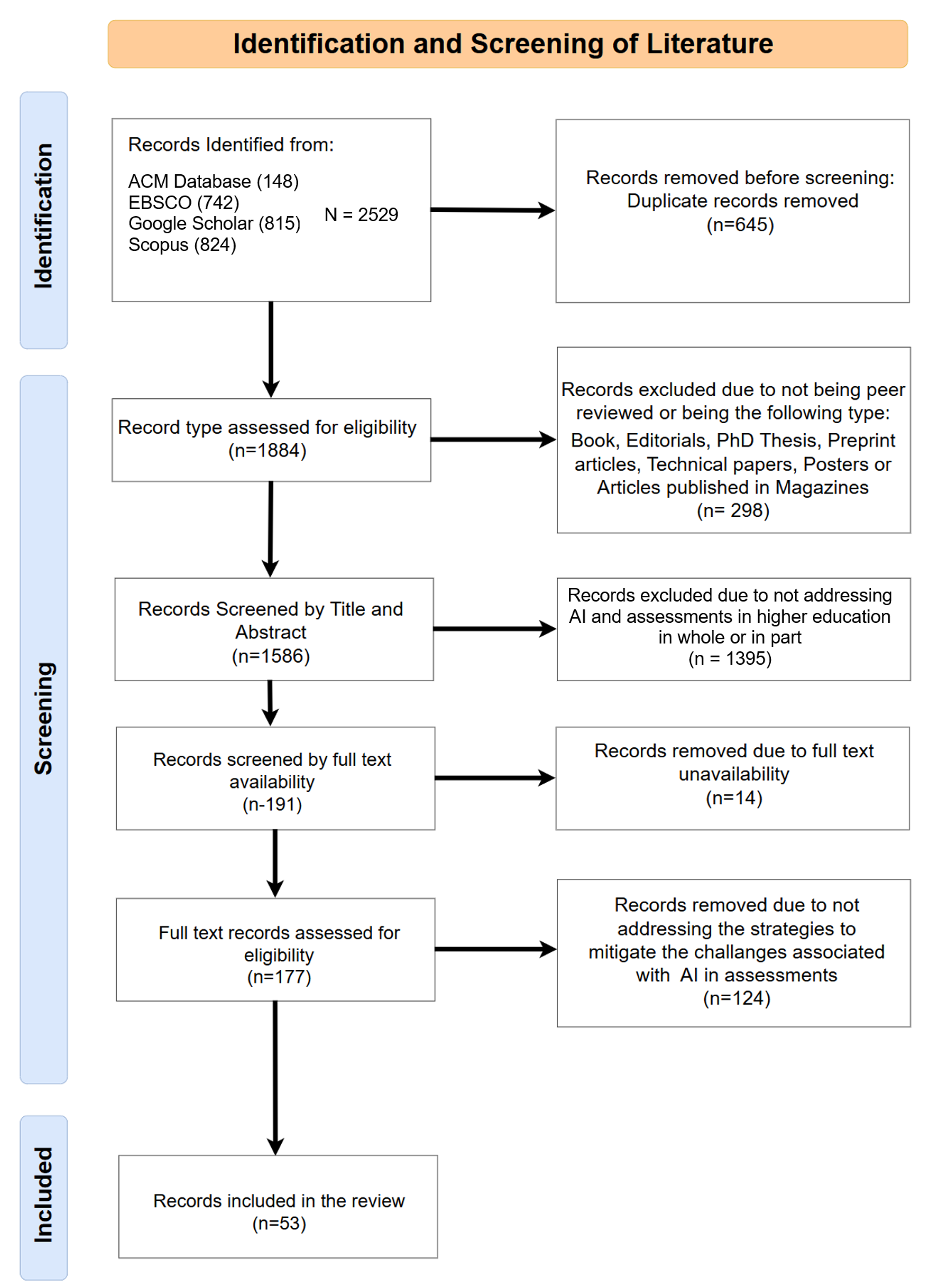


Volume 9 | Number 1

Article type: Original Research (Extended report)

Navigating the AI-Education Nexus: Mitigating Academic Integrity Challenges in the Era of Generative AI

Patrick Buckland

Mary Immaculate College, Limerick, Ireland patrick.buckland@mic.ul.ie

https://orcid.org/0000-0001-7825-376X

Marian Carcary

Mary Immaculate College, Limerick, Ireland Marian.Carcary@mic.ul.ie

https://orcid.org/0009-0009-9707-5159

This work is licensed under Creative Commons Attribution 4.0 International

Recommended Citation

Buckland, P & Carcary, M. (2026) Navigating the AI-Education Nexus: Mitigating Academic Integrity Challenges in the Era of Generative AI. Irish Journal of Technology Enhanced Learning, 9(1). https://doi.org/10.22554/3e0x1n51


Generative AI is reshaping teaching and assessment in higher education, but its capacity for misuse raises new concerns about academic integrity. This study examines approaches that higher education educators can take to safeguard honesty in students’ academic work while also harnessing AI to enrich learning and evaluation. Based on a systematic literature review (SLR) method, this study identified five interconnected strategic themes: (1) Policy and Guidelines for AI in Education; (2) AI Education and Training; (3) Attitudes towards GenAI in Education; (4) Transparency, Communication, and Engagement; and (5) Assessment Design and Format. These elements are integrated into a holistic conceptual framework that connects capability development, cultural transformation, and structural safeguards, and presents practical guidance to address key academic integrity challenges. Our findings show that isolated efforts by academics are not enough, and only an integrated, values-led approach can maintain assessment integrity, foster critical and creative skills, and prepare graduates for workplaces increasingly shaped by AI.

AI in Higher Education, Academic Integrity, Generative AI

Generative Artificial Intelligence (GenAI), and specifically Large Language Models (LLMs) such as ChatGPT and Google Gemini, is becoming increasingly prevalent, transforming the boundaries of machine-human interaction across almost all industries and sectors. In the context of higher education, GenAI is reshaping the paradigms of learning and creativity (Bennett & Abusalem, 2024), and in so doing it has garnered both positive and negative attention from academics worldwide (Klyshbekova & Abbott, 2024). While the literature highlights significant promise, it is important to acknowledge at the outset that many claims regarding the educational benefits of GenAI remain speculative, reflecting a contested and rapidly evolving research space.

GenAI has raised doubts about the efficacy of traditional assessment methods, such as reports and essays. Until recently, these have been a cornerstone of higher education assessment as a means of evaluating student understanding, critical thinking, and mastery of subject knowledge (Cong-Lem, Tran, & Nguyen, 2024). LLMs can now easily produce text on almost any subject that is almost impossible to distinguish from human-written text (Rahman & Watanobe, 2023). Educators are concerned that the ease and speed of AI-driven content creation will tempt students to bypass the learning process and rely instead on these tools for quick results (Shanto, Ahmed, & Jony, 2023), potentially undermining student understanding, critical thinking, and creativity (Cong-Lem, Tran, & Nguyen, 2024). A real concern is that the misuse of AI tools will erode the legitimacy of higher education, resulting in undervalued academic awards and reputational damage (Mrabet & Studholme, 2023).

Despite these complexities, educators should not ignore the positive implications of AI for student learning and how it can enhance their teaching. To do so may risk making them less effective educators who fail to provide students with the skills and knowledge necessary for their future careers (Van Slyke, Johnson, & Sarabadani, 2023). Existing research highlights that while many educators recognise the potential benefits of bringing AI into the classroom, they are uncertain about where to begin (Bower et al., 2024). Those who have started their GenAI journey face a fine balancing act between integrating AI to reap its positive impacts on teaching and learning and preserving academic honesty and the integrity of the learning process, underscoring a pivotal area that requires extensive research and discussion (Williams, 2024). Recent empirical evidence also cautions that poorly governed or uncritical uses of GenAI may actively harm learning outcomes, highlighting the risks of integration without appropriate pedagogical guardrails (Bastani et al., 2025). Önal (2023) stresses that integrating multifaceted strategies into assessments that mitigate the risk of academic dishonesty is vital to maintaining confidence in the educational process and, in turn, fostering a culture of integrity within higher education institutions. To this end, this paper asks: What strategies can be implemented to address the challenges of maintaining academic integrity and mitigate the concerns of educators regarding the use of GenAI in assessments within higher education?

To answer this research question, we adopted a Systematic Literature Review (SLR) method, which offered a concept-centric examination of the current literature on GenAI in higher education. The findings from this review present a high-level categorisation of concepts addressing GenAI-underpinned academic integrity challenges, organised into five distinct but interrelated themes. The paper is structured as follows. Section two reviews the current context of AI in higher education, outlining the benefits, risks and ethical challenges of GenAI for teaching, learning and assessment. Section three presents the SLR methodology. Section four outlines an integrated synopsis of the key findings uncovered from the SLR to address academic integrity challenges, along with a discussion of their core features and associated practical guidance. Section five concludes the paper and suggests avenues for future research.

While this review centres on Generative AI, the literature occasionally references broader AI-enabled tools in education, including assistive technologies and analytics systems; these are distinguished where relevant to avoid conflating generative capabilities with other forms of educational AI. Although the use of AI in education (AIEd) is not a new phenomenon, ongoing developments in LLMs have unsettled the education landscape and resulted in a contested, speculative field of study. GenAI’s proliferation has presented educators with a double-edged sword (Talaue, 2023), offering immense potential to positively transform teaching and learning processes while simultaneously threatening academic integrity and current learning paradigms (Gill et al., 2024; Mao, Chen, & Liu, 2023).

AI innovations promise a host of benefits to both students and educators (Le & Metzger, 2024). Owing to its sophisticated adaptive learning capabilities, AI can enhance student learning outcomes and engagement by personalising the learning experience to individual learning styles and offering interactive and dynamic learning (Bennett & Abusalem, 2024; Cotton, Cotton, & Shipway, 2023; Harry & Sayudin, 2023; Meça & Shkëlzeni, 2024; Wang et al., 2023). This versatility is much needed in modern educational settings, where diverse learning needs and modalities prevail (Gundu, 2023). The emergence of AI as a tutoring system or learning companion is an exciting application of AI in education, offering students increased autonomy in their learning journeys (Schön et al., 2023) and a more authentic learning experience (Gamage et al., 2023). Chatbots can provide students with supplemental education and tailored, personalised instruction, explanations, and analogies, enabling them to more effectively navigate and retain complex concepts (Mogavi et al., 2024). By pinpointing areas where students struggle, AI can supply on-demand lecture notes, tutorials, study guides, and additional practice opportunities through targeted exercises, essentially offering a customised learning experience beyond the traditional classroom (Bennett & Abusalem, 2024; Di Placito & Mortensen, 2023). In essence, the AI serves as a dialogic partner with whom the student can hold a back-and-forth conversation until deeper understanding is achieved (Lau & Guo, 2023). Real-time feedback of this kind, available around the clock, together with independent study at the student's own pace, enhances the learning experience (Hsiao, Klijn, & Chiu, 2023; Williams, 2024).

Within the classroom, AI tools can also be employed to support collaborative problem solving and peer learning, fostering student critical thinking, creativity, teamwork skills, and greater student interactivity, engagement, and learning satisfaction (Mao, Chen, & Liu, 2023). AI can also make education more equitable and accessible to students learning in a different language (Kooli, 2023; Lau & Guo, 2023) or to those with learning disabilities or diverse learning needs by providing text-to-speech capabilities, visual aids, and other user-friendly accessibility features (Meça & Shkëlzeni, 2024; Lacey & Smith, 2023). By adapting to the learner’s level of knowledge and ability (Mogavi et al., 2024), AI potentially provides less confident students with an opportunity to ask questions without the fear of judgement they may feel within the traditional classroom setting (Chan, 2023; Williams, 2024).

From the educators’ point of view, AI provides sweeping benefits, including substantial administrative support by streamlining operations, improving digital workflows, and allowing educators to focus on the art of teaching as opposed to administrative tasks. For example, AI can be used to support course preparation through the design of course syllabi and creation of lesson plans and course materials without any significant changes being required (Pokkakillath & Suleri, 2023; Krammer, 2023). AI also offers transformative pedagogical opportunities, enabling educators to rethink and even transform their teaching practices; it has been described as a ‘teaching assistant’ (Krammer, 2023), serving as instructor, translator, data source, dialogic partner, and so on (Gundu, 2023). AI acts as an assessment aid to educators through, for example, the construction of assessment questions, quizzes, problem sets, and learning scenarios; the creation of a broader variety of assessment types; and the development of adaptive contextualised assessments that provide real-time student feedback (Chan, 2023; Mrabet & Studholme, 2023). Grading efficiency is enhanced through the development of rubrics, plagiarism checks, and automated assessment scoring; in the European context, such uses must be considered in light of the EU Artificial Intelligence Act (EU, 2024), which emphasises human oversight, transparency, and safeguards for high-risk educational uses (Bower et al., 2024; Moorhouse, Yeo, & Wan, 2023). AI also supports improved prediction of student academic performance and enhanced monitoring of learner progress, thereby enabling more timely intervention and targeted support for students at risk (Schön et al., 2023; Bower et al., 2024). Such benefits help reduce the administrative burden placed on educators, enabling them to focus on innovating their pedagogical practices and on higher-quality interactions with students (Meça & Shkëlzeni, 2024; Önal, 2023; Pokkakillath & Suleri, 2023).

While the potential benefits of AIEd are notable, there are also considerable challenges. Educators are concerned that students may use these tools to bypass the learning process by opting for quick results over genuine understanding (Sweeney, 2023). GenAI has brought with it the risk that reliance on AI in academic learning could lead to diminished student motivation, critical thinking skills, writing skills, creativity, and in-depth understanding (Chan, 2023; Duah & McGivern, 2024; Oravec, 2023). There is a risk that the educational journey becomes more about generating content that meets certain criteria than about fostering a deeper, more meaningful engagement with the subject matter (Kirwan, 2023). There is also a danger that students accept the output from AI tools as objective truth. The ‘AI hallucination phenomenon’ is widely discussed in the literature, whereby AI convincingly presents incorrect or misleading information as fact (Mrabet & Studholme, 2023; Mao, Chen, & Liu, 2023). Further, AI learns from multiple data sources which are selected and shaped by humans. Thus, where societal biases, population biases, representative biases or other misinformation or inaccuracies are encoded in the AI system, these may be assimilated and reproduced by AI (Blackie, 2024; Kooli, 2023), thereby potentially negatively impacting a student’s worldview.

The prominent issue highlighted in the literature appears to be the ethical challenge of maintaining academic integrity in the face of AI-assisted content creation (Cotton, Cotton, & Shipway, 2023; Gamage et al., 2023; Mao, Chen, & Liu, 2023; Liu et al., 2019). The literature reveals a complex landscape where the burgeoning capabilities of AI tools intersect with the foundational principles of academic integrity. LLMs have capabilities that allow the production of sophisticated, high-quality essays, reports, and other forms of assessment output in a fraction of the time it would take a student to write them, consequently raising significant concerns about plagiarism, contract cheating, and the authenticity of student work (Moorhouse, Yeo, & Wan, 2023). These concerns are compounded by the fact that educators cannot prove definitively that a student has used AI to cheat in a written assessment despite the availability of AI detection tools such as GPTZero and Turnitin. Although AI detector tools are helpful, they can incorrectly attribute human-written content to AI (Moorhouse, Yeo, & Wan, 2023; Perkins et al., 2024).

Some students utilising AI tools to produce high-quality assessments may gain an unfair advantage over those not adopting the technology, perhaps due to inequity of access across marginalised or disadvantaged groups, further compromising the integrity of academic assessments (Chan, 2023; Moorhouse, Yeo, & Wan, 2023). If left unchecked, the concerns surrounding academic integrity, and the potential for AI use to undermine vital learning and critical thinking skills, could erode trust in the education system and its outputs, with broad implications for the value of academic awards and the credibility of educational institutions (Bennett & Abusalem, 2024; Eke, 2023; Moorhouse, Yeo, & Wan, 2023).

This study adopted a Systematic Literature Review (SLR) approach to explore the strategies educators can implement to address the challenges of maintaining academic integrity and mitigate their concerns regarding the use of GenAI in traditional assessments within higher education. An SLR is a labour-intensive method that presents a methodical synthesis of the current literature on a particular topic or research question (Carcary, Doherty, & Conway, 2018). The SLR approach is highly rigorous, following prepared criteria and protocols to search the literature, and identify, select, appraise, and summarise all relevant studies (Gundu, 2023). The method aims to minimise bias and provide a reliable summary of the evidence. By following a structured and rigorous process, systematic reviews ensure that the findings are reproducible and can be critically appraised by others (Shaffril, Samsuddin, & Samah, 2020).

The records included in the SLR addressed the strategies required to maintain academic integrity and mitigate educator concerns regarding GenAI use in higher education assessments. Inclusion and exclusion criteria are outlined in Table 1.

| Criterion | Inclusion | Exclusion |
|---|---|---|
| Literature Type | Peer-reviewed journals, conference proceedings, book chapters, grey literature | Books, editorials, PhD theses, preprint articles, technical papers, posters, articles published in magazines |
| Content | Discusses AI or chatbots with regard to higher education assessments | Does not discuss AI or chatbots with regard to higher education assessments |
| Language | English | Non-English |
| Time Frame | 2022-2024 | Before 2022 |
| Subject Area | AI chatbots and written assessments | Neither AI chatbots nor written assessments |

The selected databases were ACM Digital Library, EBSCO, Scopus, and Google Scholar. A primary Boolean search string was developed that covered the key concepts of artificial intelligence, higher education, and assessments, along with three secondary Boolean search strings that addressed (1) academic honesty, (2) assessment design/policies, and (3) rethinking assessment design. The secondary strings were systematically combined with the primary string in different combinations (see Table 2).

| Concept | Search String |
|---|---|
| Primary Search String | “artificial intelligence” OR ai OR ChatGPT OR chatbot* OR “large language model*” OR llms AND “higher education” OR university OR “third level” AND exam* OR (assessment* OR “written assessment*”) OR essay* OR report* |
| Academic Honesty | “academic integrity” OR “academic dishonesty” OR “academic misconduct” |
| Assessment Design/Policies | strategy* OR guideline* OR policy OR framework* OR “assessment design” OR “assessment strategy” |
| Rethinking Assessment Design | rethink* OR reassess OR re-evaluate |
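The combination of the primary string with the secondary strings can be sketched programmatically. The Python snippet below is only an illustration under stated assumptions: the review does not report which combinations were actually run, so the sketch enumerates every non-empty subset of the three secondary strings; the grouping parentheses around each concept and the `AND` joining are also assumptions, and the names `PRIMARY_GROUPS`, `SECONDARY`, `or_group`, and `build_queries` are hypothetical.

```python
from itertools import combinations

# Terms copied from Table 2. Grouping each concept in parentheses is an
# assumption; the published primary string is written without them.
PRIMARY_GROUPS = [
    ['"artificial intelligence"', "ai", "ChatGPT", "chatbot*",
     '"large language model*"', "llms"],
    ['"higher education"', "university", '"third level"'],
    ["exam*", "assessment*", '"written assessment*"', "essay*", "report*"],
]

SECONDARY = {
    "academic_honesty": ['"academic integrity"', '"academic dishonesty"',
                         '"academic misconduct"'],
    "assessment_design_policies": ["strategy*", "guideline*", "policy",
                                   "framework*", '"assessment design"',
                                   '"assessment strategy"'],
    "rethinking_assessment_design": ["rethink*", "reassess", "re-evaluate"],
}


def or_group(terms):
    """Join alternative terms with OR inside one parenthesised group."""
    return "(" + " OR ".join(terms) + ")"


def build_queries():
    """Pair the primary string with every non-empty subset of secondary strings.

    Enumerating all subsets is an assumption made for illustration only.
    """
    primary = " AND ".join(or_group(group) for group in PRIMARY_GROUPS)
    names = list(SECONDARY)
    queries = {}
    for size in range(1, len(names) + 1):
        for combo in combinations(names, size):
            extra = " AND ".join(or_group(SECONDARY[name]) for name in combo)
            queries[combo] = f"{primary} AND {extra}"
    return queries


if __name__ == "__main__":
    for combo, query in build_queries().items():
        print(", ".join(combo))
        print("  " + query)
```

Keeping each concept as a list of terms makes it straightforward to adapt the output to database-specific syntax, since individual databases differ in how they treat wildcards and operator precedence.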

In total, we identified 2,529 pieces of literature, from which 645 duplicate records were removed, leaving 1,884 records for screening. The screening phase was divided into several steps. The first step removed any records that were not peer-reviewed journal articles, conference proceedings, book chapters, or grey literature. The second step screened the remaining 1,586 records by the relevance of their title and abstract to the research question. Step three screened the remaining 191 records based on the availability of their full-text manuscripts. The final step screened the remaining records by the relevance of the full text to the research question, resulting in 53 pieces of literature for analysis (Figure 1).
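The record counts reported above can be checked with a short calculation. The following Python sketch uses only the figures stated in the text; the number of records removed at each stage is derived by subtraction, and the two full-text steps are combined because the count between them is not reported. The names `screening_flow` and the constants are hypothetical.

```python
# Counts as reported in the screening description.
IDENTIFIED = 2529
DUPLICATES_REMOVED = 645
AFTER_TYPE_SCREEN = 1586
AFTER_TITLE_ABSTRACT_SCREEN = 191
INCLUDED = 53


def screening_flow():
    """Return (stage, records_in, records_out, records_removed) tuples."""
    after_deduplication = IDENTIFIED - DUPLICATES_REMOVED
    stages = [
        ("duplicate removal", IDENTIFIED, after_deduplication),
        ("literature type", after_deduplication, AFTER_TYPE_SCREEN),
        ("title and abstract", AFTER_TYPE_SCREEN, AFTER_TITLE_ABSTRACT_SCREEN),
        # Availability and relevance are merged: the intermediate count
        # between these two steps is not given in the text.
        ("full text (availability and relevance)",
         AFTER_TITLE_ABSTRACT_SCREEN, INCLUDED),
    ]
    flow = []
    for name, records_in, records_out in stages:
        if records_out > records_in:
            raise ValueError(f"{name}: more records out than in")
        flow.append((name, records_in, records_out, records_in - records_out))
    return flow


if __name__ == "__main__":
    for name, records_in, records_out, removed in screening_flow():
        print(f"{name}: {records_in} -> {records_out} (removed {removed})")
```

Run as a script, this prints one line per stage, which makes it easy to confirm that the reported totals are internally consistent.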

Figure 1: Literature Identification and Screening

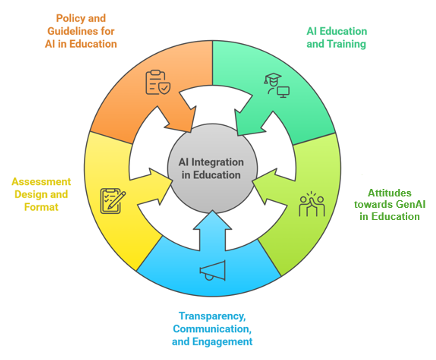

Findings from the SLR resulted in the distillation of five interrelated themes that together are essential for creating a sustainable assessment strategy in the era of GenAI integration. Themes relating to AI education and training, and transparency, communication, and engagement are seen as foundational, without which the other strategies in this review could not effectively be implemented.

Policy and Guidelines for AI in Education

AI Education and Training

Attitudes towards GenAI in Education

Transparency, Communication, and Engagement

Assessment Design and Format

Figure 2: Sustainable Assessment Strategy in the Era of GenAI Framework (This figure was generated with the assistance of Napkin.ai. The authors reviewed and edited the figure and take full responsibility for its content.)

Throughout the literature, clear, thoughtful, practical, and adaptable policies around the use of GenAI in academic settings are viewed as essential for guiding its responsible and effective integration into higher education. These policies need to carefully balance maintaining academic integrity with making the most of AI’s potential to support teaching and learning (Meça & Shkëlzeni, 2024; Oravec, 2023; Williams, 2024). Higher education institutions globally are actively developing new academic integrity frameworks, or revising existing ones, to specifically address the multifaceted implications of GenAI (Luo, 2024). The literature agrees that a central underpinning of effective policy and guidelines is the fundamental need to uphold academic integrity, which necessitates clarifying the boundaries of appropriate AI usage and effectively tackling the potential for AI-facilitated plagiarism and academic misconduct (Cotton, Cotton, & Shipway, 2023). However, these policies should also seek to achieve a delicate equilibrium between mitigating academic dishonesty and fostering the ethical integration of GenAI as a valuable tool to enhance student learning and prepare graduates for an increasingly AI-driven world (Oravec, 2023). The literature argues that policy and guidelines should address the following elements:

Educators must be explicit when defining acceptable and unacceptable AI use in line with institutional policy, which must delineate academic misconduct beyond traditional plagiarism (Meça & Shkëlzeni, 2024). Course handbooks and policy documentation should be accessible and ensure expectations are transparently communicated (Luo, 2024; Talaue, 2023). Consistency across the institution, departments, and programmes is essential to ensure clarity and fairness for all stakeholders and reduce ambiguity for educators and students in addition to supporting a cohesive academic culture and reinforcing shared standards around AI use (Meça & Shkëlzeni, 2024).

As part of their policy, institutions should outline how students acknowledge the use of AI assistance in academic work (Bennett & Abusalem, 2024). Simply listing GenAI as a co-author or general source is not sufficient. The acknowledgement of AI involvement in student work should include sufficient detail to convey the extent of its contribution. Providing details on how and why AI tools were used, including the access date and a brief description of the prompts or queries involved, helps clarify the nature of the assistance and differentiate legitimate use from potential academic misconduct (Eke, 2023; Kooli, 2023).
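The acknowledgement details described above (which tool, when it was accessed, how and why it was used, and a brief description of the prompts) could be captured in a simple structured record. The Python sketch below is one hypothetical way to do this; the class name `AIUseAcknowledgement`, its field names, the wording of the generated statement, and the example values are all assumptions for illustration and are not drawn from any institutional policy.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class AIUseAcknowledgement:
    """Hypothetical record of AI assistance declared in a piece of work."""

    tool: str            # which AI tool was used
    accessed_on: date    # access date
    purpose: str         # how and why the tool was used
    prompt_summary: str  # brief description of prompts or queries

    def __post_init__(self):
        # A bare mention of the tool is not enough: every field must be filled.
        for field_name in ("tool", "purpose", "prompt_summary"):
            if not getattr(self, field_name).strip():
                raise ValueError(f"{field_name} must not be empty")

    def statement(self) -> str:
        """Render the record as a sentence a student could include."""
        return (
            f"I used {self.tool} (accessed {self.accessed_on.isoformat()}) "
            f"to {self.purpose}. Prompts: {self.prompt_summary}"
        )


if __name__ == "__main__":
    # Illustrative values only.
    example = AIUseAcknowledgement(
        tool="ChatGPT",
        accessed_on=date(2024, 3, 1),
        purpose="get feedback on the structure of my draft",
        prompt_summary="Asked for comments on paragraph order in section 2.",
    )
    print(example.statement())
```

Requiring every field to be non-empty mirrors the point that a general mention of GenAI is insufficient; an institution could extend such a record with whatever further detail its own policy asks for.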

Educating students and staff about the capabilities and limitations of AI is perhaps the most important step for fostering responsible and informed GenAI use (Lacey & Smith, 2023; Eke, 2023; Chan, 2023) (see section 4.2). The recent rollout of the European Union's Artificial Intelligence Act (EU, 2025) marks an important step forward in how AI technologies are regulated across different sectors, including education. Effective in full from August 2nd, 2026, the EU AI Act mandates that providers and deployers of AI systems, such as HEIs, take measures to ensure a sufficient level of AI literacy among their staff and other individuals involved in the operation and use of AI systems on their behalf (EU, 2025). Although HEIs are legally required to adhere to this legislation, the act also provides a unique opportunity to create well-rounded policies that support the ethical, informed, and inclusive use of AI across teaching, learning, and research. Institutions should create strong AI literacy programmes that reflect the different needs and roles of staff and students (Chan, 2023). The act specifies that merely providing access to user manuals or documentation is insufficient; instead, institutions must offer structured training initiatives that effectively enhance AI literacy (Gill et al., 2024). By embedding AI literacy into teaching and day-to-day operations, HEIs not only meet their legal responsibilities under the EU AI Act but also help build a culture of responsible, informed AI use. This approach supports academic integrity and encourages high ethical standards in how AI is used across institutions (Chan, 2023).

AI brings with it new equity and access issues (Kooli, 2023), and equity is one of the major concerns that follows the integration of GenAI into education and assessment. Premium models that require paid subscriptions, such as GPT-4o and Claude Opus 4, as well as limited internet infrastructure, create barriers for students from lower socioeconomic backgrounds or under-resourced regions, exacerbating existing inequalities (Bennett & Abusalem, 2024; Mrabet & Studholme, 2023). Gaps in technological literacy widen this divide further (Lau & Guo, 2023). Considering these issues from a policy standpoint, it is essential that institutional frameworks actively identify and respond to emerging disparities related to AI use (Chan, 2023).

Policies should clearly outline the responsibility of institutions to ensure that all students and staff who potentially work with AI tools, regardless of socioeconomic status or prior experience, have equal opportunities to use approved AI technologies (Krammer, 2023). This includes not only providing access to the tools themselves and the supporting infrastructure but also delivering the necessary supporting training (Lim et al., 2023). In the absence of such measures, gaps in digital skills and access may exacerbate existing educational inequalities. Furthermore, institutional policies must acknowledge the risk that AI systems may perpetuate existing societal biases. As such, they should include strategies for identifying, monitoring, and addressing these issues to support an equitable and ethical academic environment. Embedding equity into AI policy frameworks helps create a more just academic environment, where stakeholders are supported in developing the competencies needed for success in an AI-integrated future (Chan, 2023).

Seeing these policies as living documents helps keep them flexible and up to date as AI technologies evolve (Önal, 2023). This way, institutions can stay on top of new developments, address ethical challenges as they arise, and keep track of the changing ways AI is being used. By regularly reviewing policies and taking on board feedback from staff, students, and changes in technology, institutions can make sure their policies and guidelines stay relevant and in line with best practices and evolving regulations. By treating policies as something that can grow and change, institutions support a culture of ongoing improvement and build long-term resilience in how they manage AI.

Maximising the potential benefits while minimising the potential harm of AI in educational settings must be the primary objective of educators and policymakers (Oravec, 2023; Williams, 2024). This objective cannot be realised if stakeholders are unaware of AI’s capabilities and limitations, especially in relation to how these tools might be used or misused in traditional assessment contexts. Effectively using these technologies requires that educators have at least a fundamental understanding of how GenAI operates and, crucially, the ability to engage with these tools (Kirwan, 2023). Because AI brings increased complexity to academic integrity, educators and students need training to effectively navigate its ethical use (Chan, 2023). This will enable those adopting the technology to make informed choices regarding its use, its potential to enhance learning, and the methods required to maintain academic integrity. This analysis emphasises two primary facets of education and training, technical AI literacy and training in ethical use, which together build a strong foundation to foster critical engagement.

Educators and students need not be AI experts to enjoy the benefits of GenAI; however, a fundamental competence in GenAI use is necessary for its successful and ethical integration into education. Training should cover the technical aspects needed to use AI tools; how the tools function, what they are good at, and where they fall short; their biases, outputs, and broader social impact; and how to resolve possible problems (Chan, 2023; Gill et al., 2024; Kirwan, 2023). Educators who can competently use AI are better able to understand how students might abuse these tools in assessments and to use AI's capabilities to design more creative, interactive, and possibly AI-resistant assessment strategies (Eke, 2023). Similarly, students with strong AI literacy can confidently use AI to support their learning processes, such as clarifying complex concepts or receiving feedback on drafts (Williams, 2024).

On the other hand, those who are less proficient in the fundamentals of AI may be reluctant to employ it in their classrooms and may maintain exaggerated negative biases against its capabilities, such as the belief that AI outputs are untrustworthy (Krammer, 2023; Lau & Guo, 2023; Talaue, 2023; Van Slyke, Johnson, & Sarabadani, 2023). The divide between those who use AI tools and those who do not may broaden disparities and inequalities in educational outcomes and professional efficacy and, importantly, may leave educators less equipped to address academic integrity concerns related to GenAI in assessments (Cong-Lem, Tran, & Nguyen, 2024).

The increasing pervasiveness of GenAI and its integration into traditionally non-AI applications raise the question of at what point a student’s work becomes unoriginal. Individual educators on a micro level and higher education institutions on a macro level may conceptualise originality differently, leading to ambiguity about what constitutes academic dishonesty in different contexts. This highlights the need for training to equip educators and students to navigate the evolving role of AI in academic writing (Duah & McGivern, 2024). Although AI literacy training is vital, equally important is guidance on how to apply AI literacy ethically and responsibly, ensuring that users understand not just the mechanics but also the implications of integrating GenAI into academic work. Doing so reduces the likelihood of accidental plagiarism or misrepresentation and can reassure those wary of adopting AI due to concerns about misconduct (Van Slyke, Johnson, & Sarabadani, 2023).

AI training is just the first step; there is a need to continually promote a culture of critical engagement with AI technologies, adopting a stance akin to President Reagan's 'trust but verify' during the Cold War (Sweeney, 2023). This mindset requires the development of advanced critical thinking abilities, including analysis, evaluation, and innovative problem-solving (Meça & Shkëlzeni, 2024). Equipped with these skills, students will be able to effectively scrutinise AI-generated outputs, detecting and addressing errors and identifying biases and misinformation (Pokkakillath & Suleri, 2023). A critical engagement mindset encourages all stakeholders to reframe the role of AI as a supplementary tool rather than a substitute for independent thinking and original work (Mogavi et al., 2024).

This approach to training, in which the technical aspects of AI literacy are combined with AI-specific ethical and academic integrity training, forms a foundation for critical engagement with AI tools and prepares stakeholders to use them responsibly in a manner that upholds the values and goals of education (Cong-Lem, Tran, & Nguyen, 2024; Kooli, 2023).

The smooth integration of GenAI into teaching and assessment is influenced by the attitudes of educators and students (Bai, Zawacki-Richter, & Muskens, 2024; Lacey & Smith, 2023). Although resistance is common in the early stages of technological adoption, resistance to embracing GenAI is increasingly portrayed as impractical and counterproductive (Luo, 2024), reinforcing the call for a forward-thinking and open mindset. The literature suggests an increasing consensus that acceptance, understood here as a willingness to engage critically and pragmatically with GenAI rather than as uncritical endorsement or compulsory adoption, is a prerequisite for navigating the academic and social effects of GenAI; in the European context, such engagement is also shaped by binding regulatory obligations under the EU Artificial Intelligence Act, reinforcing the need for responsible, human-oversight-based use (Cancela-Outeda, 2024; Gill et al., 2024; Krammer, 2023; Sweeney, 2023).

The extent to which GenAI is successfully integrated in education is largely shaped by the openness of educators to incorporating the technology into their teaching and assessment practices (Bower et al., 2024). However, openness towards GenAI varies. For example, Lau & Guo (2023) found that some educators advocate for restrictions on GenAI to preserve core academic competencies and ensure academic integrity. Others embrace AI and see it as a way to innovate in their work, emphasising its capacity to personalise instruction, automate repetitive work, and deepen students’ interaction with technology.

Openness towards AI adoption is not a case of “all-or-nothing” (Chan, 2023; Kumar et al., 2024; Meça & Shkëlzeni, 2024). Some may be open to using GenAI in limited contexts, such as administrative tasks, while maintaining traditional approaches in areas such as assessment. Others may feel it is their responsibility to maintain distrust in the technology or may adopt a wait-and-see stance, influenced by institutional policies, peer practices, or their own technological comfort (Meça & Shkëlzeni, 2024).

While educator concerns about GenAI are reasonable and warranted as we navigate this new technology, some concerns may be based on flawed assumptions or limited understanding, showing the need for professional development to support educators in gaining a more informed view of the technology. This is especially important considering educators who are critical of AI may discourage students who are unsure about using the technology, placing them at an increased disadvantage relative to those who embrace it (Kirwan, 2023; Van Slyke, Johnson, & Sarabadani, 2023).

Students’ attitudes towards AI are also varied, although generally they appear to be more accepting of AI than educators are (Duah & McGivern, 2024). Students take advantage of GenAI to help with tasks such as brainstorming, generating feedback, summarising material, and refining their writing. They largely regard GenAI as an aid to support academic development rather than a shortcut to avoid learning (Chan, 2023; Duah & McGivern, 2024; Schön et al., 2023). Some students compare the use of GenAI to a calculator; it offers support but still requires users to understand the material and provide accurate input (Bower et al., 2024; Duah & McGivern, 2024). Some students, however, express concerns regarding ethical risks, reliability issues, and the potential for both deliberate and accidental misuse (Kooli, 2023; Rahman & Watanobe, 2023). Doubts persist about the accuracy of AI-generated outputs, particularly when biases or factual inaccuracies are identified (Duah & McGivern, 2024). There is also an unease that stems from fears about how AI usage might be perceived. Luo (2024) notes that some students avoid using GenAI to escape the stigma of being seen as less independent or capable.

Educators, as the leaders in the classroom, can have a significant impact on the tone and culture surrounding AI usage, shaping students’ perceptions of such tools (Chan, 2023). When instructors adopt supportive, knowledgeable, and transparent approaches, they tend to encourage responsible use. In contrast, unclear or punitive stances can discourage ethical engagement and heighten student anxiety (Mogavi et al., 2024). It is inevitable that educators will hold a wide range of perceptions and beliefs about GenAI, which may in turn produce mixed messages about AI usage and varied student experiences (Bower et al., 2024). Clear institutional policies and honest conversations within teaching practices can help mitigate any confusion (Moorhouse, Yeo, & Wan, 2023).

To effectively incorporate GenAI in higher education, it is clear that openness from both educators and students is key. Educators influence how these tools are perceived and used through their attitudes, guidance, and teaching practices, while students bring their own experiences and expectations to the table. When both groups are supported with clear policies, professional development, and open discussion, GenAI can become a key asset that enhances learning, promotes academic integrity, and prepares students for a technologically shifting world.

While the importance of developing policy around AI in education cannot be overstated, focusing narrowly on rules alone is not enough (Luo, 2024). The literature overwhelmingly advocates that in the context of assessment, genuine transparency, open communication, and stakeholder engagement are critical to addressing fears of misuse, reinforcing trust in assessment integrity, and ensuring fair and ethical practice (Eke, 2023).

A stumbling block to GenAI adoption in higher education assessments is a lack of trust, particularly around how AI makes evaluative judgements. Transparency is crucial to legitimising AI-assisted assessment by demystifying how algorithms operate, how they process student input, and how outcomes are generated (Chan, 2023; Williams, 2024). Yet, as Van Slyke, Johnson, & Sarabadani (2023) note, this is inherently challenging due to the opaque and fast-evolving nature of AI systems, often referred to as “black boxes” (Klyshbekova & Abbott, 2024). Institutions must prioritise transparency around whether and how AI tools are used to grade, provide feedback, or flag concerns, including the criteria guiding AI decision-making, the level of human supervision, and how student data is protected. Williams (2024) emphasises the ethical responsibility to manage assessment data in compliance with regulations such as GDPR, including secure data storage and anonymisation, to maintain student confidence in AI-based assessment systems.

The role of GenAI in assessments must be clear to students and educators. Transparent and consistent communication is needed to address fears of misuse, correct misconceptions, and help prevent both intentional and unintentional breaches of academic integrity (Luo, 2024; Moorhouse, Yeo, & Wan, 2023). Educators must give explicit instructions as to whether the use of AI is allowed in assessments. Students need to know what is acceptable, what restrictions apply, and when and how these tools may be used with the goal of removing ambiguity (Moorhouse, Yeo, & Wan, 2023; Luo, 2024; Perkins et al., 2024). In assessments where AI use is allowed, students should clearly acknowledge usage by specifying which tool was used, how it was applied, and the nature of its contribution to the final submission (Perkins et al., 2024). This further promotes transparency and accountability (Kumar et al., 2024; Talaue, 2023), encourages the responsible use of AI, and helps students ensure their actions are consistent with institutional expectations (Williams, 2024; Talaue, 2023).
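The three elements of an acknowledgement described above (which tool was used, how it was applied, and the nature of its contribution) could be captured in a simple structured record. The following is a minimal illustrative sketch only; the class name `AIUseAcknowledgement`, its field names, and the wording of the generated statement are hypothetical and are not drawn from the reviewed literature or from any institutional template.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIUseAcknowledgement:
    """Hypothetical record of a student's declared GenAI use in one submission."""

    tool: str          # which tool was used
    purpose: str       # how the tool was applied
    contribution: str  # nature of its contribution to the final submission

    def is_complete(self) -> bool:
        # A declaration counts as complete only if no element is left blank.
        return all(value.strip() for value in (self.tool, self.purpose, self.contribution))

    def statement(self) -> str:
        # Render the declaration as a sentence a student could attach to their work.
        return (
            f"I used {self.tool} to {self.purpose}. "
            f"Its contribution to the final submission: {self.contribution}."
        )


ack = AIUseAcknowledgement(
    tool="ChatGPT",
    purpose="brainstorm essay topics",
    contribution="initial topic ideas only",
)
print(ack.statement())
```

A record of this kind would only support, not replace, the explicit instructions educators give about whether AI use is permitted in a given assessment.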

When designing or modifying policy that addresses the complexities of AI in assessment, institutions should seek to involve educators, students, IT staff, parents, and other relevant stakeholders. This collaborative approach brings together multiple perspectives, resulting in more inclusive, context-sensitive, and practical policies (Perera & Lankathilaka, 2023) and a shared sense of responsibility for how AI is implemented and understood in assessment practices (Eke, 2023; Kirwan, 2023; Luo, 2024). Educators contribute pedagogical insight, students highlight issues of fairness and usability, and IT staff offer guidance on technical feasibility and data privacy (Bennett & Abusalem, 2024). Institutions could pursue collaborative design workshops and iterative consultation processes to ensure that such policies are ethically sound and practically effective (Bai, Zawacki-Richter, & Muskens, 2024). When various stakeholders are genuinely involved in decisions about AI in assessment, institutions cultivate an environment of trust and adaptability, thereby reinforcing the legitimacy and credibility of assessment outcomes in the digital age (Perera & Lankathilaka, 2023).

GenAI presents exciting opportunities to rethink assessments, enhancing their effectiveness, fairness, and responsiveness to diverse student needs. GenAI has the potential to support educators in moving beyond traditional methods, embracing more personalised and adaptive approaches that promote deeper learning while upholding fairness and academic integrity (Cotton, Cotton, & Shipway, 2023).

GenAI enables educators to develop assessments that can be tailored to individual learning needs (Mrabet & Studholme, 2023). It has been suggested that AI systems are capable of analysing student performance data to inform customised assessments that target areas of difficulty, offering a scaffolded approach to learning (Rahman & Watanobe, 2023; Schön et al., 2023). This approach has the added benefit of increasing student engagement and understanding by addressing specific knowledge gaps. A simple example would be educators generating questions that vary in complexity based on a student’s proficiency level, ensuring that assessments remain relevant and effective across a spectrum of learning abilities (Hsiao, Klijn, & Chiu, 2023; Moorhouse, Yeo, & Wan, 2023). In addition to customised assessments, GenAI facilitates instantaneous feedback on students’ responses, enabling them to identify errors and understand ideas in real time (Chan, 2023).
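The idea of varying question complexity by proficiency level can be sketched as a simple rule. This is an illustrative assumption rather than a method reported in the cited studies: the function name `pick_difficulty`, the use of an average of recent scores as the proficiency signal, and the 0.5 and 0.8 thresholds are all hypothetical choices that an educator would need to calibrate.

```python
def pick_difficulty(recent_scores: list[float], low: float = 0.5, high: float = 0.8) -> str:
    """Choose a question difficulty band from recent scores in the range 0.0 to 1.0.

    The thresholds are hypothetical defaults, not values from the literature.
    """
    if not recent_scores:
        # No performance data yet, so start in the middle band.
        return "medium"
    average = sum(recent_scores) / len(recent_scores)
    if average < low:
        return "easy"
    if average >= high:
        return "hard"
    return "medium"


print(pick_difficulty([0.2, 0.4]))  # struggling student
print(pick_difficulty([0.9, 0.8]))  # proficient student
```

In practice, the selected band would be passed to whatever question bank or GenAI prompt the educator uses, with the educator reviewing the generated questions before they reach students.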

Maintaining fairness in traditional assessment design continues to be a challenge for educators, particularly for students with diverse needs and backgrounds (Gundu, 2023). AI tools can help address these disparities by creating more inclusive assessment formats. For example, while not specific to GenAI, AI-powered text-to-speech and speech-to-text functionalities help students with visual or hearing impairments to participate fully in assessments, promoting accessibility and equity (Rahman & Watanobe, 2023).

GenAI is trained on vast datasets that reflect the human zeitgeist; however, these datasets also include various social biases and stereotypes. Institutions, when possible, must implement rigorous oversight and ensure the development of diverse and representative datasets in these tools (Williams, 2024). Despite their inherent biases, AI tools can be used to minimise bias from human graders by standardising the evaluation process to provide consistent and relatively objective scoring, particularly in tasks such as essay grading, where subjective judgments can vary.

A common issue found in the literature is the potential for students to use GenAI to generate polished yet unoriginal responses that bypass critical engagement and genuine understanding (Chan, 2023; Klyshbekova & Abbott, 2024; Oravec, 2023). Various AI-powered websites that can humanise AI-generated text further circumvent the already unreliable AI text detectors. To counteract this, the design of assessments must evolve. Educators are encouraged to prioritise formats that require higher-order thinking, creativity, and contextual application of knowledge, capabilities that remain difficult for AI to replicate (Sweeney, 2023). Oral presentations, collaborative group work, and problem-based learning (Newell, 2023; Van Wyk, 2024) are just a few examples that require student participation and original thought (Kirwan, 2023; Talaue, 2023). Another effective approach is incorporating reflective practice, which requires students to explain their reasoning, decision-making processes, and personal learning journeys. Although it is nearly impossible to design an AI-proof assessment (other than in-person invigilated exams), a thoughtful approach to assessment redesign can help uphold academic standards and nurture essential human skills, safeguarding education’s intellectual integrity in the face of rapidly advancing AI capabilities.

One of the most useful benefits of GenAI is the ability to provide immediate feedback (Wang, 2023). Student inputs can be tracked in real time by AI, detecting learning gaps and providing prompt, targeted support. The result is continuous and responsive learning, a significant improvement on traditional feedback cycles that are often delayed and lack personalisation (Gamage et al., 2023; Kooli, 2023). By minimising the delay between a student's action and the corresponding response, AI reinforces concepts precisely when students are most engaged, boosting both understanding and memory retention. It also enhances formative assessment by continuously adapting to each learner’s performance in real time. Rather than offering a static snapshot of ability, adaptive systems respond to learners’ evolving needs by modifying both the difficulty and content of tasks (Moorhouse, Yeo, & Wan, 2023). This approach keeps assessments in step with the learner’s progress, offering a more accurate view of their development over time. While students benefit from immediate, actionable insights, educators gain access to detailed analytics that reveal patterns of progress and areas of concern (Bower et al., 2024). This data-informed perspective allows for more precise interventions, reducing the likelihood that students who fall behind go unnoticed.

The growing influence of GenAI in higher education highlights the urgent need for institutions to treat its integration as a priority (Chan, 2023). Tools such as ChatGPT offer valuable opportunities to enhance student learning, reduce administrative workloads for educators, and diversify assessment practices (Krammer, 2023; Mrabet & Studholme, 2023; Pokkakillath & Suleri, 2023). At the same time, these technologies raise important concerns, including risks to academic integrity, unequal access among students, and the potential erosion of authentic learning engagement (Moorhouse, Yeo, & Wan, 2023; Talaue, 2023).

In response, this study proposes a comprehensive framework that brings together coherent policy development, AI literacy, a culture of openness, clear communication, and thoughtful assessment design (Cotton, Cotton, & Shipway, 2023). One of the key insights from the literature is that isolated or one-size-fits-all approaches are unlikely to be effective. A holistic approach is needed, one that combines technical training with ethical guidance, connects policy to practice, and promotes a culture in which students and educators engage with AI critically and responsibly.

When institutions adopt an innovative and integrated strategy, they are better placed to uphold academic integrity while also embracing the benefits that GenAI offers for teaching and learning (Chan, 2023). Although consensus is building around the importance of these issues, there are limitations in the existing research. Many of the suggested strategies are relatively new and have not yet been thoroughly tested in varied educational settings. Further research is needed to explore how students and educators interpret and respond to these strategies in order to understand their practical impact and guide more effective implementation (Luo, 2024). In addition, student perspectives are under-represented in much of the literature (Chan, 2023). There is limited understanding of how students interpret institutional policies, how they experience the use of AI in their academic work, or how their behaviours and motivations are shaped by evolving norms around technology use (Gamage et al., 2023). Equity is also a significant concern as new technologies are introduced into education (Wang, 2023). While it is widely acknowledged that limited access to tools and training may disadvantage some student groups, there is little detailed evidence on how these gaps play out in practice or on the steps institutions are taking to address them.

An additional consideration relates to governance and regulatory compliance. While the themes found in this review offer direction for incorporating GenAI into instruction and assessment, their application must be in line with institutional governance frameworks and relevant legal requirements. This includes adhering to the General Data Protection Regulation (GDPR), taking data sovereignty into account when utilising third-party AI systems, and being in line with new regulatory frameworks like the European Union's Artificial Intelligence Act. Protecting student data, upholding institutional accountability, and encouraging the responsible adoption of AI in higher education all depend on AI-related initiatives adhering to these requirements (Hirpara et al., 2025).

Future research should aim to produce evidence that reflects the specific context in which AI is being implemented, supported by robust empirical data. There is also considerable value in cross-disciplinary and cross-institutional comparisons, which can help identify practices that are effective, adaptable, and responsive to the diverse demands of different educational environments. Furthermore, systematic evaluations of AI-related policies and assessment strategies are essential for guiding evidence-based decisions and ensuring that institutional responses are both practical and effective. Understanding how regulatory developments, such as the European Union’s AI Act, shape institutional practice will also be vital in developing strategies that are both compliant and forward-looking.

Ultimately, the question is not whether AI should be integrated into education, but how it can be implemented in ways that support academic integrity, enhance learning outcomes, and uphold the core mission of higher education. The most promising way forward is through an approach that is informed, inclusive and flexible. As institutions navigate the challenges and opportunities of the AI era, their ability to balance innovation with ethical responsibility will be central to their success. Achieving this balance will influence the effectiveness of their educational strategies and reflect their commitment to preparing students for a future where human and machine intelligence coexist.

Note: During the preparation of the manuscript, Generative AI was used for minor text editing, after which the authors reviewed the content as needed. We take full responsibility for the content of the publication and acknowledge that it is our own work.

Ajevski, M., Barker, K., Gilbert, A., Hardie, L., & Ryan, F. (2023). ChatGPT and the future of legal education and practice. The Law Teacher, 57(3), 352–364. https://doi.org/10.1080/03069400.2023.2207426

Bai, Y. H., Zawacki-Richter, O., & Muskens, W. (2024). Re-examining the Future Prospects of Artificial intelligence in Education in light of the GDPR and ChatGPT. Turkish Online Journal of Distance Education, 25(1), 20–32.

Bastani, H., Bastani, O., Sungu, A., Ge, H., Kabakcı, Ö., & Mariman, R. (2025). Generative AI without guardrails can harm learning: Evidence from high school mathematics. Proceedings of the National Academy of Sciences, 122(26), e2422633122. https://doi.org/10.1073/pnas.2422633122

Bennett, L., & Abusalem, A. (2024). Artificial Intelligence (AI) and its Potential Impact on the Future of Higher Education. Athens Journal of Education, 11(3), 195–212. https://doi.org/10.30958/aje.11-3-2

Blackie, M. A. (2024). ChatGPT is a game changer: detection and eradication is not the way forward. Teaching in Higher Education, 29(4), 1109–1116. https://doi.org/10.1080/13562517.2023.2300951

Bower, M., Torrington, J., Lai, J. W. M., Petocz, P., & Alfano, M. (2024). How should we change teaching and assessment in response to increasingly powerful generative Artificial Intelligence? Outcomes of the ChatGPT teacher survey. Education and Information Technologies. https://doi.org/10.1007/s10639-023-12405-0

Cancela-Outeda, C. (2024). The EU’s AI act: A framework for collaborative governance. Internet of Things, 27, 101291. https://doi.org/10.1016/j.iot.2024.101291

Carcary, M., Doherty, E., & Conway, G. (2018). An analysis of the systematic literature review (SLR) approach in the field of IS management. In Proceedings of the 17th European Conference on Research Methods in Business and Management (ECRM 2018) (p. 78). Academic Conferences and Publishing International.

Chan, C. K. Y. (2023). A comprehensive AI policy education framework for university teaching and learning. International Journal of Educational Technology in Higher Education, 20(1). https://doi.org/10.1186/s41239-023-00408-3

Cong-Lem, N., Tran, T. N., & Nguyen, T. T. (2024). Academic integrity in the age of generative AI: Perceptions and responses of Vietnamese EFL teachers. Teaching English with Technology, 24(1), 28–47. https://doi.org/10.56297/fsyb3031/mxnb7567

Cotton, D. R. E., Cotton, P. A., & Shipway, J. R. (2023). Chatting and cheating: Ensuring academic integrity in the era of ChatGPT. Innovations in Education and Teaching International, 61(2), 228–239. https://doi.org/10.1080/14703297.2023.2190148

Di Placito, M. L., & Mortensen, E. (2023). Applying AI efforts to student assessments: That is, alternative innovations! The Interdisciplinary Journal of Student Success, 2, 93–108. https://cdspress.ca/wp-content/uploads/2023/07/CALL-AI_MLDP-1.pdf

Duah, J. E., & McGivern, P. (2024). How generative artificial intelligence has blurred notions of authorial identity and academic norms in higher education, necessitating clear university usage policies. International Journal of Information and Learning Technology, 41(2), 180–193. https://doi.org/10.1108/ijilt-11-2023-0213

Eke, D. O. (2023). ChatGPT and the rise of generative AI: Threat to academic integrity? Journal of Responsible Technology, 13, 100060. https://doi.org/10.1016/j.jrt.2023.100060

European Union. (2025). Regulation (EU) 2024/1689 of the European Parliament and of the Council laying down harmonised rules on artificial intelligence (Artificial Intelligence Act), Article 4. https://artificialintelligenceact.eu/article/4/

Gamage, K. A., Dehideniya, S. C. P., Xu, Z., & Tang, X. (2023). ChatGPT and higher education assessments: More opportunities than concerns? Journal of Applied Learning & Teaching, 6(2). https://doi.org/10.37074/jalt.2023.6.2.32

Gill, S. S., Xu, M., Patros, P., Wu, H., Kaur, R., Kaur, K., Fuller, S., Singh, M., Arora, P., Parlikad, A. K., Stankovski, V., Abraham, A., Ghosh, S. K., Lutfiyya, H., Kanhere, S. S., Bahsoon, R., Rana, O., Dustdar, S., Sakellariou, R., & Buyya, R. (2024). Transformative effects of ChatGPT on modern education: Emerging Era of AI Chatbots. Internet of Things and Cyber-Physical Systems, 4, 19–23. https://doi.org/10.1016/j.iotcps.2023.06.002

Gundu, T. (2023). ChatGPT-Proofing: Redesigning assessment practices for E-Learning. European Conference on e-Learning, 22(1), 121–130. https://doi.org/10.34190/ecel.22.1.1888

Mogavi, R. H., Deng, C., Kim, J. J., Zhou, P., Kwon, Y. D., Metwally, A. H. S., Tlili, A., Bassanelli, S., Bucchiarone, A., Gujar, S., Nacke, L. E., & Hui, P. (2024). ChatGPT in education: A blessing or a curse? A qualitative study exploring early adopters’ utilization and perceptions. Computers in Human Behavior Artificial Humans, 2(1), 100027. https://doi.org/10.1016/j.chbah.2023.100027

Harry, A., & Sayudin, S. (2023). Role of AI in education. Interdisciplinary Journal of Humanities, 2(3), 260–268. https://doi.org/10.58631/injurity.v2i3.52

Hirpara, N., Weber, M., Szakonyi, A., Cardona, T., & Singh, D. (2025). Exploring the role of GenAI in shaping education. In International Conference on Intelligent Human Computer Interaction (pp. 121-134). Cham: Springer Nature Switzerland. https://doi.org/10.1007/978-3-031-88881-6_10

Hsiao, Y., Klijn, N., & Chiu, M. (2023). Developing a framework to re-design writing assignment assessment for the era of Large Language Models. Learning Research and Practice, 9(2), 148–158. https://doi.org/10.1080/23735082.2023.2257234

Kirwan, A. (2023). ChatGPT and university teaching, learning and assessment: some initial reflections on teaching academic integrity in the age of Large Language Models. Irish Educational Studies, 43(4), 1389–1406. https://doi.org/10.1080/03323315.2023.2284901

Klyshbekova, M., & Abbott, P. (2024). ChatGPT and assessment in higher education: A magic wand or a disruptor? The Electronic Journal of e-Learning, 22(2), 30–45. https://doi.org/10.34190/ejel.21.5.3114

Kooli, C. (2023). Chatbots in Education and Research: A Critical Examination of ethical implications and solutions. Sustainability, 15(7), 5614. https://doi.org/10.3390/su15075614

Kooli, C., & Yusuf, N. (2024). Transforming Educational Assessment: Insights into the use of ChatGPT and large language models in grading. International Journal of Human-Computer Interaction, 1–12. https://doi.org/10.1080/10447318.2024.2338330

Krammer, S. M. (2023). Is there a glitch in the matrix? Artificial intelligence and management education. Management Learning, 56(2), 367–388. https://doi.org/10.1177/13505076231217667

Kumar, R., Eaton, S. E., Mindzak, M., & Morrison, R. (2024). Academic Integrity and Artificial intelligence: An Overview. In Springer international handbooks of education (pp. 1583–1596). https://doi.org/10.1007/978-3-031-54144-5_153

Lacey, M. M., & Smith, D. P. (2023). Teaching and assessment of the future today: higher education and AI. Microbiology Australia, 44(3), 124–126. https://doi.org/10.1071/ma23036

Lau, S., & Guo, P. J. (2023). From “Ban it till we understand it” to “Resistance is futile”: How university programming instructors plan to adapt as more students use AI code generation and explanation tools such as ChatGPT and GitHub Copilot. In Proceedings of the 2023 ACM Conference on International Computing Education Research (ICER ’23 V1) (pp. 1–16). Association for Computing Machinery. https://doi.org/10.1145/3568813.3600138

Le, A. V., & Metzger, W. (2024). Assessing the impact and challenges of AI-based language models on the education sector: a proposal for new assessment strategies and design. Journal of Teaching in Travel & Tourism, 24(2), 167–178. https://doi.org/10.1080/15313220.2024.2311907

Lim, W. M., Gunasekara, A., Pallant, J. L., Pallant, J. I., & Pechenkina, E. (2023). Generative AI and the future of education: Ragnarök or reformation? A paradoxical perspective from management educators. The International Journal of Management Education, 21(2), 100790. https://doi.org/10.1016/j.ijme.2023.100790

Liu, X., Wang, S., Wang, P., & Wu, D. (2019). Automatic grading of programming assignments: An approach based on formal semantics. In Proceedings of the 2019 IEEE/ACM 41st International Conference on Software Engineering: Software Engineering Education and Training (ICSE-SEET) (pp. 118–127). IEEE. https://doi.org/10.1109/ICSE-SEET.2019.00022

Luo, J. (2024). A critical review of GenAI policies in higher education assessment: a call to reconsider the “originality” of students’ work. Assessment & Evaluation in Higher Education, 49(5), 651–664. https://doi.org/10.1080/02602938.2024.2309963

Mao, J., Chen, B., & Liu, J. C. (2023). Generative Artificial intelligence in Education and its implications for assessment. TechTrends, 68(1), 58–66. https://doi.org/10.1007/s11528-023-00911-4

Meça, A., & Shkëlzeni, N. (2024). Academic integrity in the face of generative language models. In Lecture Notes of the Institute for Computer Sciences, Social Informatics and Telecommunications Engineering (pp. 58–70). Springer Nature Switzerland. https://doi.org/10.1007/978-3-031-50215-6_5

Moorhouse, B. L., Yeo, M. A., & Wan, Y. (2023). Generative AI tools and assessment: Guidelines of the world’s top-ranking universities. Computers and Education Open, 5, 100151. https://doi.org/10.1016/j.caeo.2023.100151

Mrabet, J., & Studholme, R. (2023). ChatGPT: A friend or a foe? In Proceedings of the 2023 International Conference on Computational Intelligence and Knowledge Economy (ICCIKE) (pp. 269–274). IEEE. https://doi.org/10.1109/ICCIKE58312.2023.10131713

Newell, S. J. (2023). Employing the interactive oral to mitigate threats to academic integrity from ChatGPT. Scholarship of Teaching and Learning in Psychology, 11(3), 527–536. https://doi.org/10.1037/stl0000371

Önal, A. (2023). Rethinking foreign language assessment in the light of recent improvements in artificial intelligence. In H. Avara (Ed.), The art of teaching English in the 21st century settings (pp. 319–336). ISRES Publishing. https://www.isres.org/books/978-625-6959-33-0_15-12-2023.pdf

Oravec, J. A. (2023). Artificial intelligence implications for academic cheating: Expanding the dimensions of responsible human–AI collaboration with ChatGPT. Journal of Interactive Learning Research, 34(2), 213–237. https://www.learntechlib.org/p/222340

Perera, P., & Lankathilaka, M. (2023). AI in higher education: A literature review of ChatGPT and guidelines for responsible implementation. International Journal of Research and Innovation in Social Science, 7(6), 306–314. https://doi.org/10.47772/IJRISS.2023.762

Perkins, M., Roe, J., Postma, D., McGaughran, J., & Hickerson, D. (2023). Detection of GPT-4 generated text in higher Education: combining academic judgement and software to identify generative AI tool misuse. Journal of Academic Ethics, 22(1), 89–113. https://doi.org/10.1007/s10805-023-09492-6

Perkins, M., Gezgin, U. B., & Roe, J. (2020). Reducing plagiarism through academic misconduct education. International Journal for Educational Integrity, 16(1). https://doi.org/10.1007/s40979-020-00052-8

Pokkakillath, S., & Suleri, J. (2023). ChatGPT and its impact on education. Research in Hospitality Management, 13(1), 31–34. https://doi.org/10.1080/22243534.2023.2239579

Rahman, M. M., & Watanobe, Y. (2023). ChatGPT for Education and Research: Opportunities, Threats, and Strategies. Applied Sciences, 13(9), 5783. https://doi.org/10.3390/app13095783

Schön, E.-M., Neumann, M., Hofmann-Stölting, C., Baeza-Yates, R., & Rauschenberger, M. (2023). How are AI assistants changing higher education? Frontiers in Computer Science, 5, Article 1208550. https://doi.org/10.3389/fcomp.2023.1208550

Shaffril, H. A. M., Samsuddin, S. F., & Samah, A. A. (2020). The ABC of systematic literature review: The basic methodological guidance for beginners. Quality & Quantity, 55(4), 1319–1346. https://doi.org/10.1007/s11135-020-01059-6

Shanto, S. S., Ahmed, Z., & Jony, A. I. (2023). PAIGE: A generative AI-based framework for promoting assignment integrity in higher education. STEM Education, 3(4), 288–305. https://doi.org/10.3934/steme.2023018

Sweeney, S. (2023). Academic dishonesty, essay mills, and artificial intelligence: Rethinking assessment strategies. In Proceedings of the 9th International Conference on Higher Education Advances (HEAd’23) (pp. 965–972). Universitat Politècnica de València. https://doi.org/10.4995/HEAd23.2023.16181

Talaue, F. G. (2023). Dissonance in generative AI use among student writers: How should curriculum managers respond? In E3S Web of Conferences, 426, Article 01058. EDP Sciences. https://doi.org/10.1051/e3sconf/202342601058

Van Slyke, C., Johnson, R., & Sarabadani, J. (2023). Generative Artificial intelligence in Information Systems Education: Challenges, consequences, and responses. Communications of the Association for Information Systems, 53(1), 1–21. https://doi.org/10.17705/1cais.05301

Van Wyk, M. M. (2024). Is ChatGPT an opportunity or a threat? Preventive strategies employed by academics related to a GenAI-based LLM at a faculty of education. Journal of Applied Learning & Teaching, 7(1). https://doi.org/10.37074/jalt.2024.7.1.15

Wang, T. (2023). Navigating Generative AI (ChatGPT) in Higher Education: Opportunities and Challenges. In C. Anutariya, D. Liu, Kinshuk, A. Tlili, J. Yang, & M. Chang (Eds.), Smart Learning for A Sustainable Society. ICSLE 2023. Lecture Notes in Educational Technology. Springer. https://doi.org/10.1007/978-981-99-5961-7_28

Wang, X., Wrede, S., van Rijn, L., & Wöhrle, J. (2023). AI-based quiz system for personalised learning. In Proceedings of the 16th Annual International Conference of Education, Research and Innovation (ICERI 2023) (pp. 5025–5034). IATED. https://doi.org/10.21125/iceri.2023.1257

Williams, R. T. (2024). The ethical implications of using generative chatbots in higher education. Frontiers in Education, 8, Article 1331607. https://doi.org/10.3389/feduc.2023.1331607

* Address for corresponding author: patrick.buckland@mic.ul.ie